Data Ingestion & Knowledge Sources

100+ Native Connectors – SharePoint, Salesforce, ServiceNow, Confluence, databases, file shares, Slack, websites merged into one index
OCR & Structured Data – Indexes scanned docs, intranet pages, knowledge articles, multimedia content
Real-Time Sync – Incremental crawls, push APIs, scheduled syncs keep content fresh

✅ Auto-Indexing – Points at files, indexes unstructured data automatically without manual setup
✅ Auto-Sync – Connected repositories sync automatically, document changes reflected almost instantly
File Formats – Supports PDF, DOCX, PPT, TXT and common enterprise formats
⚠️ Limited Scope – No website crawling or YouTube ingestion, narrower than CustomGPT
Enterprise Scale – Handles large corporate data sets, exact limits not published

1,400+ file formats – PDF, DOCX, Excel, PowerPoint, Markdown, HTML + auto-extraction from ZIP/RAR/7Z archives
Website crawling – Sitemap indexing with configurable depth for help docs, FAQs, and public content
Multimedia transcription – AI Vision, OCR, YouTube/Vimeo/podcast speech-to-text built-in
Cloud integrations – Google Drive, SharePoint, OneDrive, Dropbox, Notion with auto-sync
Knowledge platforms – Zendesk, Freshdesk, HubSpot, Confluence, Shopify connectors
Massive scale – 60M words (Standard) / 300M words (Premium) per bot with no performance degradation

Atomic UI Components – Drop-in components for search pages, support hubs, commerce sites with generative answers
Native Platform Integrations – Salesforce, Sitecore with AI answers inside existing tools
REST APIs – Build custom chatbots, virtual assistants on Coveo's retrieval engine

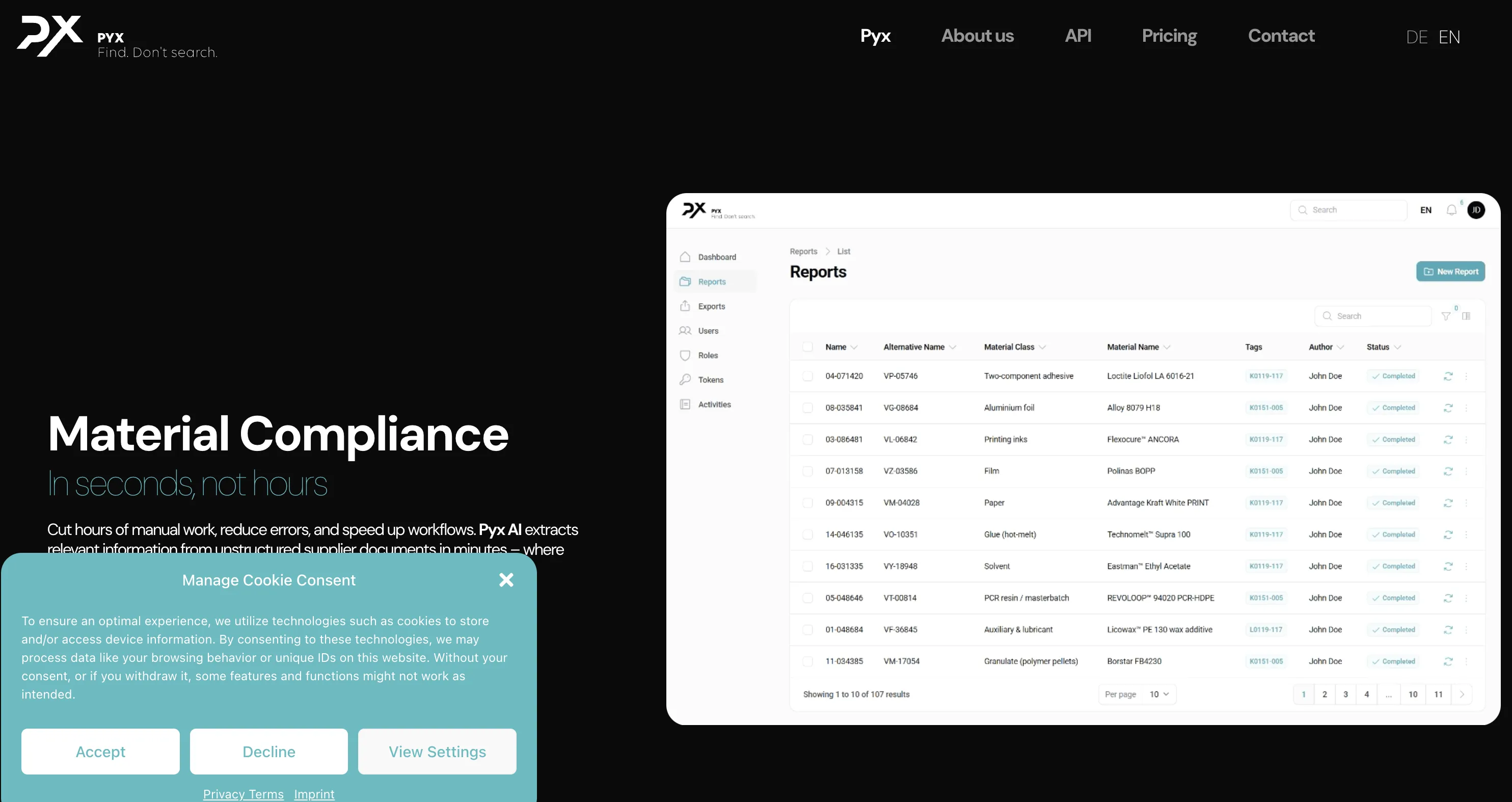

⚠️ Standalone Only – Own chat/search interface, not a "deploy everywhere" platform
⚠️ No External Channels – No Slack bot, Zapier connector, or public API
Web/Desktop UI – Users interact through Pyx's interface, minimal third-party chat synergy
Custom Integration – Deeper integrations require custom dev work or future updates

Website embedding – Lightweight JS widget or iframe with customizable positioning
CMS plugins – WordPress, Wix, Webflow, Framer, Squarespace native support
5,000+ app ecosystem – Zapier connects CRMs, marketing, e-commerce tools
MCP Server – Integrate with Claude Desktop, Cursor, ChatGPT, Windsurf
OpenAI SDK compatible – Drop-in replacement for OpenAI API endpoints
LiveChat + Slack – Native chat widgets with human handoff capabilities

Uses Relevance Generative Answering (RGA), a two-step flow (retrieval, then LLM generation) that produces concise, source-cited answers.

Respects permissions, showing each user only the content they’re allowed to see.

Blends the direct answer with classic search results so people can dig deeper if they want.
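
The two-step flow described above (retrieve first, then generate from what was retrieved) can be sketched roughly as follows. This is a toy illustration, not Coveo's implementation: the `Passage` type, the term-overlap scorer, and the prompt wording are all assumptions made for the example, and the actual LLM call is left out.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    doc_id: str
    title: str
    text: str


def retrieve(query: str, passages: list[Passage], top_k: int = 2) -> list[Passage]:
    """Step 1: rank passages by naive term overlap with the query (toy scorer)."""
    terms = set(query.lower().split())

    def overlap(p: Passage) -> int:
        return len(terms & set(p.text.lower().split()))

    ranked = sorted(passages, key=overlap, reverse=True)
    # Drop passages that share no terms with the query at all.
    return [p for p in ranked[:top_k] if overlap(p) > 0]


def build_prompt(query: str, hits: list[Passage]) -> str:
    """Step 2: ground the generation step in numbered passages so it can cite them."""
    context = "\n".join(
        f"[{i}] {p.title}: {p.text}" for i, p in enumerate(hits, start=1)
    )
    return (
        "Answer using only the numbered passages and cite them like [1].\n"
        f"{context}\n\nQuestion: {query}"
    )
```

The list returned by `retrieve` can also be shown next to the generated answer, which is one way to get the "direct answer plus classic results" blend mentioned above.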

Conversational Search – Context-aware Q&A over enterprise documents with follow-up questions
⚠️ Internal Focus – Designed for knowledge management, no lead capture or human handoff
Multi-Language – Likely supports multiple languages, though not a headline feature
⚠️ Basic Analytics – Stores chat history, fewer business insights than customer-facing tools

✅ #1 accuracy – Median 5/5 in independent benchmarks, 10% lower hallucination than OpenAI
✅ Source citations – Every response includes clickable links to original documents
✅ 93% resolution rate – Handles queries autonomously, reducing human workload
✅ 92 languages – Native multilingual support without per-language config
✅ Lead capture – Built-in email collection, custom forms, real-time notifications
✅ Human handoff – Escalation with full conversation context preserved

Atomic components are fully styleable with CSS, making it easy to match your brand’s look and feel.

You can tweak answer formatting and citation display through configs; deeper personality tweaks mean editing the prompt.

⚠️ Minimal Branding – Logo/color tweaks only, designed as internal tool not white-label
⚠️ No Embedding – Standalone interface, no domain-embed or widget options available
Pyx UI Only – Look stays "Pyx AI" by design, public branding not supported
Security Focus – Emphasis on user management and access controls over theming

Full white-labeling included – Colors, logos, CSS, custom domains at no extra cost
2-minute setup – No-code wizard with drag-and-drop interface
Persona customization – Control AI personality, tone, response style via pre-prompts
Visual theme editor – Real-time preview of branding changes
Domain allowlisting – Restrict embedding to approved sites only

Azure OpenAI GPT – Primary models via Azure OpenAI for high-quality generation
Bring Your Own LLM – Relevance-Augmented Passage Retrieval API supports custom models
Auto-Tuning – Handles model tuning, prompt optimization; API override available

⚠️ Undisclosed Model – Likely GPT-3.5/GPT-4 but exact model not publicly documented
⚠️ No Model Selection – Cannot switch LLMs or configure speed vs accuracy tradeoffs
⚠️ Single Configuration – Every query uses same model, no toggles or fine-tuning
Closed Architecture – Model details, context window, capabilities hidden from users intentionally

GPT-5.1 models – Latest thinking models (Optimal & Smart variants)
GPT-4 series – GPT-4, GPT-4 Turbo, GPT-4o available
Claude 4.5 – Anthropic's Opus available for Enterprise
Auto model routing – Balances cost/performance automatically
Zero API key management – All models managed behind the scenes

Developer Experience (API & SDKs)

REST APIs & SDKs – Java, .NET, JavaScript for indexing, connectors, querying
UI Components – Atomic and Quantic components for fast front-end integration
Enterprise Documentation – Step-by-step guides for pipelines, index management

⚠️ No API – No open API or SDKs, everything through Pyx interface
⚠️ No Embedding – Cannot integrate into other apps or call programmatically
Closed Ecosystem – No GitHub examples, community plug-ins, or extensibility options
Turnkey Only – Great for ready-made tool, limits deep customization or extensions

REST API – Full-featured for agents, projects, data ingestion, chat queries
Python SDK – Open-source customgpt-client with full API coverage
Postman collections – Pre-built requests for rapid prototyping
Webhooks – Real-time event notifications for conversations and leads
OpenAI compatible – Use existing OpenAI SDK code with minimal changes

Pairs keyword search with semantic vector search so the LLM gets the best possible context.

Reranking plus smart prompts keep hallucinations low and citations precise.

Built on a scalable architecture that handles heavy query loads and massive content sets.
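
Pairing keyword search with semantic vector search means two ranked result lists have to be merged before the best passages reach the LLM. The vendor's actual fusion and reranking logic is not described in the text above, so the sketch below uses reciprocal rank fusion, a widely used generic technique, purely as an illustrative stand-in. The document ids and the constant `k = 60` are assumptions for the example.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document ids into a single ranking."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents near the top of any list contribute the most.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id so the order is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical inputs: one list from a keyword index, one from a vector index.
keyword_hits = ["doc-a", "doc-b", "doc-c"]
vector_hits = ["doc-b", "doc-d", "doc-a"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Documents that appear high in both lists (here `doc-b` and `doc-a`) end up ahead of documents that only one retriever found, which is the practical benefit of the hybrid approach.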

Real-Time Answers – Serves accurate responses from internal documents, sparse public benchmarks
Auto-Sync Freshness – Connected repositories keep retrieval context always current automatically
⚠️ Limited Transparency – No anti-hallucination metrics or advanced re-ranking details published
Competitive RAG – Likely comparable to standard GPT-based systems on relevance control

Sub-second responses – Optimized RAG with vector search and multi-layer caching
Benchmark-proven – 13% higher accuracy, 34% faster than OpenAI Assistants API
Anti-hallucination tech – Responses grounded only in your provided content
OpenGraph citations – Rich visual cards with titles, descriptions, images
99.9% uptime – Auto-scaling infrastructure handles traffic spikes

Customization & Flexibility (Behavior & Knowledge)

Fine-tune which sources and metadata the engine uses via query pipelines and filters.

Integrates with SSO/LDAP so results are tailored to each user’s permissions.

Developers can tweak prompt templates or inject business rules to shape the output.
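
Permission-aware results boil down to filtering candidates against the groups a user belongs to before anything is shown or passed to the LLM. The sketch below assumes group memberships have already been resolved (for example from an SSO or LDAP directory); the `Document` type and the `allowed_groups` field are hypothetical names for illustration, not part of any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    allowed_groups: frozenset[str]


def visible_to(user_groups: set[str], results: list[Document]) -> list[Document]:
    """Keep only results that share at least one group with the user."""
    return [d for d in results if d.allowed_groups & user_groups]
```

Applying the filter before generation, rather than after, means restricted passages never enter the prompt, so they cannot leak into an answer.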

✅ Auto-Sync Updates – Knowledge base updated without manual uploads or scheduling
⚠️ No Persona Controls – AI voice stays neutral, no tone or behavior customization
✅ Access Controls – Strong role-based permissions, admins set document visibility per user
Closed Environment – Great for content updates, limited for AI behavior or deployment

Live content updates – Add/remove content with automatic re-indexing
System prompts – Shape agent behavior and voice through instructions
Multi-agent support – Different bots for different teams
Smart defaults – No ML expertise required for custom behavior

Enterprise Licensing – Pricing based on sources, query volume, features
99.999% Uptime – Scales to millions of queries, regional data centers
Annual Contracts – Volume tiers with optional premium support

Seat-Based Pricing – ~$30 per user per month, predictable monthly costs
✅ Cost-Effective Small Teams – Affordable for teams under 50 users
⚠️ Large Team Costs – 100 users = $3,000/month, can scale expensively
Unlimited Content – Document/token limits not published, gated only by user seats
Free Trial + Enterprise – Hands-on trial available, custom pricing for large deployments

Standard: $99/mo – 60M words, 10 bots
Premium: $449/mo – 300M words, 100 bots
Auto-scaling – Managed cloud scales with demand
Flat rates – No per-query charges

ISO 27001/27018, SOC 2 – Plus HIPAA-compatible deployments available
Permission-Aware – Granular access controls, users see only authorized content
Private Cloud/On-Prem – Deployment options for strict data-residency requirements

✅ GDPR Compliance – Germany-based, implicit EU data protection and regional sovereignty
✅ Enterprise Privacy – Data isolated per customer, encrypted in transit and at rest
✅ No Model Training – Customer data not used for external LLM training
✅ Role-Based Access – Built-in controls, admins set document visibility per role
⚠️ Limited Certifications – On-prem or SOC 2/ISO 27001/HIPAA not publicly documented

SOC 2 Type II + GDPR – Third-party audited compliance
Encryption – 256-bit AES at rest, SSL/TLS in transit
Access controls – RBAC, 2FA, SSO, domain allowlisting
Data isolation – Never trains on your data

Observability & Monitoring

Analytics Dashboard – Tracks query volume, engagement, generative-answer performance
Pipeline Logs – Exportable for deeper analysis and troubleshooting
A/B Testing – Query pipeline experiments to measure impact, fine-tune relevance

Basic Stats – User activity, query counts, top-referenced documents for admins
⚠️ No Deep Analytics – No conversation analytics dashboards or real-time logging
Adoption Tracking – Useful for usage monitoring, lighter insights than full suites
Set-and-Forget – Minimal monitoring overhead, contact support for issues

Real-time dashboard – Query volumes, token usage, response times
Customer Intelligence – User behavior patterns, popular queries, knowledge gaps
Conversation analytics – Full transcripts, resolution rates, common questions
Export capabilities – API export to BI tools and data warehouses

Enterprise Support – Account managers, 24/7 help, extensive training programs
Partner Network – Coveo Connect community with docs, forums, certified integrations
Regular Updates – Product releases and industry events for latest trends

✅ Direct Support – Email, phone, chat with hands-on onboarding approach
⚠️ No Open Community – Closed solution, no plug-ins or user-built extensions
Internal Roadmap – Product updates from Pyx only, no community marketplace
Quick Setup Focus – Emphasizes minimal admin overhead for internal knowledge search

Comprehensive docs – Tutorials, cookbooks, API references
Email + in-app support – Under 24hr response time
Premium support – Dedicated account managers for Premium/Enterprise
Open-source SDK – Python SDK, Postman, GitHub examples
5,000+ Zapier apps – CRMs, e-commerce, marketing integrations

Additional Considerations

Coveo goes beyond Q&A to power search, recommendations, and discovery for large digital experiences.

Deep integration with enterprise systems and strong permissioning make it ideal for internal knowledge management.

Feature-rich but best suited for organizations with an established IT team to tune and maintain it.

✅ No-Fuss Internal Search – Employees use without coding, simple deployment for teams
⚠️ Not Public-Facing – Not ideal for customer chatbots or developer-heavy customization
Siloed Environment – Single AI search environment, not broad extensible platform
Simpler Scope – Less flexible than CustomGPT, but faster setup for internal use

Time-to-value – 2-minute deployment vs weeks with DIY
Always current – Auto-updates to latest GPT models
Proven scale – 6,000+ organizations, millions of queries
Multi-LLM – OpenAI + Claude reduces vendor lock-in

No-Code Interface & Usability

Admin Console – Atomic components enable minimal-code starts
⚠️ Developer Involvement – Full generative setup requires technical resources

Best For – Teams with existing IT resources, more complex than pure no-code

✅ Straightforward UI – Users log in, ask questions, get answers without coding
✅ No-Code Admin – Admins connect data sources, Pyx indexes automatically
Minimal Customization – UI stays consistent and uncluttered by design
Internal Q&A Hub – Perfect for employee use, not external embedding or branding

2-minute deployment – Fastest time-to-value in the industry
Wizard interface – Step-by-step with visual previews
Drag-and-drop – Upload files, paste URLs, connect cloud storage
In-browser testing – Test before deploying to production
Zero learning curve – Productive on day one

Market Position – Enterprise AI-powered search/discovery with RGA for large-scale knowledge management
Target Customers – Large enterprises with complex content (SharePoint, Salesforce, ServiceNow, Confluence) needing permission-aware search
Key Competitors – Azure AI Search, Vectara.ai, Glean, Elastic Enterprise Search
Competitive Advantages – 100+ connectors, hybrid search, permission-aware results, 99.999% uptime SLA
Pricing – Enterprise licensing higher than SaaS chatbots; best value for unified search across massive content
Use Case Fit – Knowledge hubs, support portals, commerce sites with generative answers

Market Position – Turnkey internal knowledge search (Germany), not embeddable chatbot platform
Target Customers – Small-mid European teams needing GDPR compliance and simple deployment
Key Competitors – Glean, Guru, Notion AI; not customer-facing chatbots like CustomGPT
✅ Advantages – Simple scope, auto-sync, GDPR compliance, ~$30/user/month predictable pricing
⚠️ Use Case Fit – Perfect for <50 user teams, not API integrations or public chatbots

Market position – Leading RAG platform balancing enterprise accuracy with no-code usability. Trusted by 6,000+ orgs including Adobe, MIT, Dropbox.
Key differentiators – #1 benchmarked accuracy • 1,400+ formats • Full white-labeling included • Flat-rate pricing
vs OpenAI – 10% lower hallucination, 13% higher accuracy, 34% faster
vs Botsonic/Chatbase – More file formats, source citations, no hidden costs
vs LangChain – Production-ready in 2 min vs weeks of development

Azure OpenAI GPT – Primary models via Azure OpenAI for high-quality generation
Model Flexibility – Relevance-Augmented Passage Retrieval API supports custom LLMs
Auto-Tuning – Handles model tuning, prompt optimization automatically; API override available
Search Integration – LLM tightly integrated with keyword + semantic search pipeline

⚠️ Undisclosed LLM – Likely GPT-3.5/GPT-4 but model details not publicly documented
⚠️ No Model Selection – Cannot switch LLMs or choose speed vs accuracy configurations
⚠️ Opaque Architecture – Context window size and capabilities not exposed to users
Simplicity Focus – Hides technical complexity, users ask questions and get answers
⚠️ No Fine-Tuning – Cannot customize model on domain data for specialized responses

OpenAI – GPT-5.1 (Optimal/Smart), GPT-4 series
Anthropic – Claude 4.5 Opus/Sonnet (Enterprise)
Auto-routing – Intelligent model selection for cost/performance
Managed – No API keys or fine-tuning required

RGA (Relevance Generative Answering) – Two-step retrieval + LLM producing source-cited answers
Hybrid Search – Keyword + semantic vector search for optimal LLM context
Reranking & Smart Prompts – Keeps hallucinations low, citations precise
Permission-Aware – SSO/LDAP integration shows only authorized content per user
Query Pipelines – Fine-tune sources, metadata, filters for retrieval control
99.999% Uptime – Scalable architecture for heavy query loads, massive content sets

Conversational RAG – Context-aware search over enterprise documents with follow-up support
✅ Auto-Sync – Repositories sync automatically, changes reflected almost instantly
Document Formats – PDF, DOCX, PPT, TXT and common enterprise formats supported
⚠️ No Advanced Controls – Chunking, embedding models, similarity thresholds not exposed
⚠️ Limited Transparency – No citation metrics or anti-hallucination details published
Closed System – Optimized for internal Q&A, limited visibility into retrieval architecture

GPT-4 + RAG – Outperforms OpenAI in independent benchmarks
Anti-hallucination – Responses grounded in your content only
Automatic citations – Clickable source links in every response
Sub-second latency – Optimized vector search and caching
Scale to 300M words – No performance degradation at scale

Industries – Financial Services, Telecommunications, High-Tech, Retail, Healthcare, Manufacturing
Internal Knowledge – Enterprise systems integration, permissioning for documentation, knowledge hubs
Customer Support – Support hubs with generative answers from knowledge bases, ticket history
Commerce Sites – Product search, recommendations, AI-powered discovery features
Content Scale – Large distributed content across SharePoint, databases, file shares, Confluence, ServiceNow
Team Sizes – Large enterprises with IT teams, millions of queries

✅ Internal Knowledge Search – Employees asking questions about company documents and policies
✅ Team Onboarding – New hires finding information without bothering colleagues
✅ Policy Lookup – HR, compliance, operational procedure retrieval for staff
✅ Small European Teams – GDPR-compliant internal search with EU data residency
⚠️ NOT SUITABLE FOR – Public chatbots, customer support, API integrations, multi-channel deployment

Customer support – 24/7 AI handling common queries with citations
Internal knowledge – HR policies, onboarding, technical docs
Sales enablement – Product info, lead qualification, education
Documentation – Help centers, FAQs with auto-crawling
E-commerce – Product recommendations, order assistance

ISO 27001/27018, SOC 2 – International security, privacy standards for enterprises
HIPAA-Compatible – Deployments available for healthcare compliance requirements
Granular Access Controls – Permission-aware search, SSO/LDAP integration
Private Cloud/On-Prem – Options for strict data-residency requirements
99.999% Uptime SLA – Regional data centers for mission-critical infrastructure

✅ GDPR Compliance – Germany-based with implicit EU data protection compliance
✅ German Data Residency – EU storage location for regional data sovereignty requirements
✅ Enterprise Privacy – Customer data isolated, encrypted in transit and at rest
✅ Role-Based Access – Built-in controls, admins set document visibility per user
⚠️ Limited Certifications – SOC 2, ISO 27001, HIPAA not publicly documented

SOC 2 Type II + GDPR – Regular third-party audits, full EU compliance
256-bit AES encryption – Data at rest; SSL/TLS in transit
SSO + 2FA + RBAC – Enterprise access controls with role-based permissions
Data isolation – Never trains on customer data
Domain allowlisting – Restrict chatbot to approved domains

Enterprise Licensing – $600 to $1,320 depending on sources, query volume, features
Pro Plan – Entry-level with core search, RGA for smaller enterprises
Enterprise Plan – Full-featured with advanced capabilities, higher volumes, premium support
Annual Contracts – Volume tiers with optional premium support packages
⚠️ Consumption-Based – Pricing model can make costs hard to predict

Best Value For – Unified search across massive content, millions of queries

Seat-Based Pricing – ~$30 per user per month
✅ Small Team Value – Affordable for teams under 50 users, predictable costs
⚠️ Scalability Cost – 100 users = $3,000/month, expensive for large organizations
Unlimited Content – No published document limits, gated only by user seats
Free Trial + Enterprise – Evaluation available, custom pricing for volume discounts

Standard: $99/mo – 10 chatbots, 60M words, 5K items/bot
Premium: $449/mo – 100 chatbots, 300M words, 20K items/bot
Enterprise: Custom – SSO, dedicated support, custom SLAs
7-day free trial – Full Standard access, no charges
Flat-rate pricing – No per-query charges, no hidden costs
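
The seat-based and flat-rate figures quoted above can be compared with a little arithmetic. The sketch below uses only the list prices stated in this comparison (about $30 per seat, $99 and $449 flat) and ignores feature differences, discounts, and enterprise quotes, so treat it as a rough sticker-price check rather than a buying guide. The function names are made up for the example.

```python
SEAT_PRICE_USD = 30  # approximate per-user monthly price quoted above
FLAT_PLANS_USD = {"Standard": 99, "Premium": 449}  # flat monthly rates quoted above


def seat_based_monthly_cost(users: int, seat_price: int = SEAT_PRICE_USD) -> int:
    """Monthly cost when every user needs a paid seat."""
    return users * seat_price


def break_even_users(flat_price: int, seat_price: int = SEAT_PRICE_USD) -> int:
    """Smallest team size at which seat-based pricing costs at least the flat plan."""
    return -(-flat_price // seat_price)  # ceiling division on integers
```

With these numbers, `seat_based_monthly_cost(100)` reproduces the $3,000/month figure, and seat-based pricing reaches the Standard flat rate at 4 users and the Premium flat rate at 15 users.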

Enterprise Support – Account managers, 24/7 help, guaranteed response times
Partner Network – Certified integrations via Coveo Connect community
Documentation – Step-by-step guides for pipelines, index management, connectors
Training Programs – Admin console, Atomic components, developer integration
Regular Updates – Product releases, industry events for latest trends

✅ Direct Support – Email, phone, chat with hands-on onboarding approach
✅ Quick Deployment – Minimal admin overhead, connect sources and start asking questions
⚠️ No Open Community – Closed solution, no plug-ins or user extensions
⚠️ No Developer Docs – No API documentation or programmatic access guides
Internal Roadmap – Updates from Pyx only, no user-contributed features

Documentation hub – Docs, tutorials, API references
Support channels – Email, in-app chat, dedicated managers (Premium+)
Open-source – Python SDK, Postman, GitHub examples
Community – User community + 5,000 Zapier integrations

Limitations & Considerations

⚠️ Developer Involvement – Full generative setup requires technical resources

⚠️ Cost Predictability – Consumption-based pricing hard to predict for enterprise scale

⚠️ IT Team Needed – Best for organizations with established technical teams

⚠️ Enterprise Focus – Optimized for enterprises vs. SMBs or startups

NOT Ideal For – Small businesses, plug-and-play chatbot needs, immediate no-code deployment

⚠️ No Public API – Cannot embed or call programmatically, standalone UI only
⚠️ No Messaging Integrations – No Slack, Teams, WhatsApp or chat platform connectors
⚠️ Limited Branding – Minimal customization, not white-label solution for public deployment
⚠️ No Advanced Controls – Cannot configure RAG parameters, model selection, retrieval strategies
⚠️ Seat-Based Scaling – Expensive for large orgs vs usage-based pricing models
✅ Best For – Small European teams (<50 users) prioritizing simplicity and GDPR over flexibility

Managed service – Less control over RAG pipeline vs build-your-own
Model selection – OpenAI + Anthropic only; no Cohere, AI21, open-source
Real-time data – Requires re-indexing; not ideal for live inventory/prices
Enterprise features – Custom SSO only on Enterprise plan

Agentic AI Integration – Coveo for Agentforce, expanded API suite, Design Partner Program (2024-2025)
Agent API Suite – Search API, Passage Retrieval API, Answer API for grounding agents
Salesforce Agentforce – Native integration for customer service, sales, marketing agents
AWS RAG-as-a-Service – MCP Server for Amazon Bedrock AgentCore, Agents, Quick Suite (Dec 2024)
Four Tools – Passage Retrieval, Answer gen (Amazon Nova), Search, Fetch
Security-First – Inherits document/item-level permissions automatically for trusted answers

⚠️ NO Agent Capabilities – No autonomous agents, tool calling, or multi-agent orchestration
Conversational Search Only – Context-aware dialogue for Q&A, not agentic or autonomous behavior
Basic RAG Architecture – Standard retrieval without function calling, tool use, or workflows
⚠️ No External Actions – Cannot invoke APIs, execute code, query databases, or interact externally
Internal Knowledge Focus – Employee Q&A about documents, not task automation or workflows

Custom AI Agents – Autonomous GPT-4/Claude agents for business tasks
Multi-Agent Systems – Specialized agents for support, sales, knowledge
Memory & Context – Persistent conversation history across sessions
Tool Integration – Webhooks + 5,000 Zapier apps for automation
Continuous Learning – Auto re-indexing without manual retraining

RAG-as-a-Service Assessment

Platform Type – Enterprise search with RAG-as-a-Service, Relevance Generative Answering (RGA)
RAG Launch – AWS RAG-as-a-Service announced December 1, 2024 as cloud-native offering
40% Accuracy Improvement – RAG increases base model accuracy according to industry studies
Hybrid Search – Keyword, vector, hybrid search with relevance tuning
100+ Connectors – SharePoint, Salesforce, ServiceNow, Confluence, databases, Slack
Best For – Enterprises with distributed content needing permission-aware search, knowledge hubs, generative answers

⚠️ NOT TRUE RAG-AS-A-SERVICE – Standalone internal app, not API-accessible RAG platform
Turnkey Application – Self-contained Q&A tool vs developer-accessible RAG infrastructure
⚠️ No API Access – No REST API, SDKs, programmatic access unlike CustomGPT/Vectara
Closed Application – Web/desktop interface only, cannot build custom applications on top
SaaS vs RaaS – Software-as-a-Service (standalone app) NOT Retrieval-as-a-Service (API infrastructure)
Best Comparison Category – Internal search tools (Glean, Guru), not developer RAG platforms

Platform type – TRUE RAG-AS-A-SERVICE with managed infrastructure
API-first – REST API, Python SDK, OpenAI compatibility, MCP Server
No-code option – 2-minute wizard deployment for non-developers
Hybrid positioning – Serves both dev teams (APIs) and business users (no-code)
Enterprise ready – SOC 2 Type II, GDPR, WCAG 2.0, flat-rate pricing
